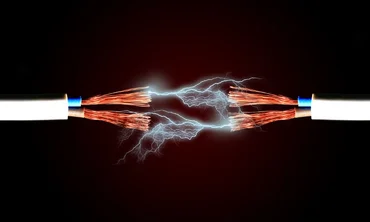

AI infrastructure - OpenAI slamming the brakes on UK data center plans sparks electrifying debate around the AI energy bill

Summary:

- Who pays the electricity bill in the end?

Remember back in September last year when OpenAI announced Stargate UK, a major data center project at Cobalt Park in North Tyneside, part of a broader £31 billion tech investment package? It was going to deploy up to 8,000 GPUs initially, scaling to 31,000, and the UK Government punched the air in excitement at what it saw as an endorsement of its AI powerhouse economy ambitions.

Flash forward to this month and OpenAI slammed the brakes on, blaming the cost of energy and regulation, both leading back to the door of that same government. In particular, the administration’s green targets and Net Zero ambitions are being cited by critics on the back of the US firm’s new announcement.

Whatever the case, the bottom line is simple enough - OpenAI is not building in the world’s third largest AI economy in the way it said it would a few months ago because it doesn’t like the look of the electricity bill it would receive!

Now, critics of OpenAI - there are one or two around! - might counter that this might actually be another example of the young firm being ‘flexible’ with its decisions and policies. Sora, anyone? Let’s ask Disney about that one...

Too expensive

But it is undeniable that the UK has the highest industrial electricity prices across the member states of the International Energy Agency (IEA), more than four times those of the US, Finland, Norway, and Sweden. So the UK is one of the more expensive places to do AI work from that point of view.

Add to that the shortfall in established infrastructure - the UK's largest data center runs at 120 megawatts; current AI development plans start at 500 megawatts and could rise to a gigawatt without breaking sweat.
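To put that gap in proportion, here is a minimal Python sketch using only the three figures quoted above. The function name `scale_multiple` is made up for illustration, and the comparison assumes the megawatt figures are directly comparable.

```python
# Figures quoted in the article, in megawatts.
LARGEST_UK_SITE_MW = 120
AI_PLAN_START_MW = 500
AI_PLAN_UPPER_MW = 1_000  # one gigawatt


def scale_multiple(target_mw: float, baseline_mw: float = LARGEST_UK_SITE_MW) -> float:
    """Return how many times larger the target is than the baseline."""
    if baseline_mw <= 0:
        raise ValueError("baseline_mw must be positive")
    return target_mw / baseline_mw


if __name__ == "__main__":
    plans = (
        ("starting point for AI plans", AI_PLAN_START_MW),
        ("gigawatt-class plan", AI_PLAN_UPPER_MW),
    )
    for label, mw in plans:
        print(f"{label}: {scale_multiple(mw):.1f}x the largest UK site")
```

On those numbers, even the starting point is a bit more than four times the size of the biggest site the UK has today, and a gigawatt is a bit more than eight times.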

Ofgem, the UK energy regulator, has warned that data center demand for AI goals will require more energy than is currently used by the whole country. Ofgem finds there are currently around 140 proposed data center schemes, which together would want at least 50 gigawatts of electricity, exceeding the entire country’s current peak demand.

So, build more UK data centers! Good idea - tell that to local lobby groups protesting the environmental and climate impact of the type of massive building work that would entail. The process already takes a long time in the UK - up to two years to build a data center, another three, at least, to get connected to the grid - and that’s without planning objections, injunctions, public inquiries etc etc.

So, another option might be to move your AI ambitions elsewhere. Research house Oxford Economics has stated its view that hyperscalers may indeed look to the likes of the Nordics in search of lower energy costs, a threat to the GDP of both the UK and the European Union (EU):

A US-style AI investment bonanza is unlikely in the EU because of planning and energy capacity constraints, though these are also likely to lead to greater diversification of data centre locations. The Nordics and parts of South Europe are attractive alternatives to the traditional Frankfurt, London, Amsterdam, Paris and Dublin cluster.

More and more demand

And all of this is happening against a backdrop of ever-increasing demand to power the AI industry. The International Energy Agency (IEA), which explores AI's growing energy footprint and options for meeting data centre power demand, just published data showing that electricity consumption from AI data centers rose by 50% in 2025, and is on track to double by 2030.
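For a sense of what "double by 2030" means year on year, here is a rough Python sketch. It assumes the doubling is measured from the 2025 level, so over five years, and that growth compounds at a constant rate; neither assumption comes from the IEA, and `implied_annual_growth` is a name invented for this example.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1 / years) - 1


if __name__ == "__main__":
    # Assumption: doubling (2.0x) over the five years from 2025 to 2030.
    rate = implied_annual_growth(2.0, 5)
    print(f"Implied growth: {rate:.1%} per year")
```

On that reading, doubling works out at roughly 15% a year, well below the 50% jump reported for 2025. A different baseline year would change the answer.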

In the Key Questions on Energy and AI report, the IEA notes that CapEx from the five largest tech firms in the AI space rose to $400 billion in 2025. Much to the horror of the Red Braces ‘show us the money’ brigade on Wall Street, that’s a trend that’s going to continue for a long time to come with predictions of a 75% increase this year alone.

That said, the IEA has good and bad news. The former:

Energy consumption per AI query has declined massively, but much more energy-intensive use cases are becoming increasingly popular. Measured per individual task, the energy efficiency of AI is improving at a rate unprecedented in energy history. Software and hardware advances have resulted in the energy use per AI task dropping by at least an order of magnitude annually in recent years. Simple text queries now typically consume less electricity than running a television over the same period of time. If all conventional internet searches were performed with simple AI text queries, it would consume less than 4 terawatt-hours (TWh) of electricity annually, equivalent to less than 1% of total data centre consumption today.

But along comes agentic AI and all bets are back off:

New energy-intensive AI applications are increasingly being launched and used, such as those for video generation, reasoning and agentic tasks. These kinds of tasks can consume hundreds or thousands of times more energy per query than simple text generation. The energy demand of AI is therefore the result of three rapidly evolving and uncertain trends: improvements in efficiency, surging uptake, and changing model capabilities, which can unlock new and, in many instances, far more energy-intensive use cases. To improve the robustness of the outlook for AI’s energy demand, close monitoring, frequent updates and cooperation with the tech sector, including more systematic energy consumption disclosures, will remain important.
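Why do the heavy use cases matter so much? A back-of-the-envelope Python sketch makes the point. The 1% share of heavy queries is a purely hypothetical input chosen for illustration; only the "hundreds or thousands of times" multiples come from the IEA passage above. The function `heavy_share_of_energy` is likewise invented for this example.

```python
def heavy_share_of_energy(heavy_query_share: float, energy_multiple: float) -> float:
    """Fraction of total energy consumed by heavy queries.

    heavy_query_share: fraction of all queries that are heavy (0 to 1).
    energy_multiple: energy of one heavy query relative to one simple
        text query, which is normalised to 1 unit.
    """
    if not 0 <= heavy_query_share <= 1:
        raise ValueError("heavy_query_share must be between 0 and 1")
    if energy_multiple <= 0:
        raise ValueError("energy_multiple must be positive")
    heavy_energy = heavy_query_share * energy_multiple
    simple_energy = (1 - heavy_query_share) * 1.0
    return heavy_energy / (heavy_energy + simple_energy)


if __name__ == "__main__":
    # Hypothetical mix: 1 in 100 queries is a heavy (video, reasoning, agentic) task.
    for multiple in (100, 1000):
        share = heavy_share_of_energy(0.01, multiple)
        print(f"{multiple}x per heavy query -> {share:.0%} of total energy")
```

Under that made-up mix, heavy queries at 100 times the energy of a text query already account for about half of all consumption, and at 1,000 times they account for about 91%. That is why per-query efficiency gains alone say little about the total bill.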

Who pays?

But someone’s got to pay the bill at the end of the day. The danger many fear is it will be the poor consumer who ends up with higher monthly bills, although this is an outcome that would be politically difficult to sell to the electorate. In the US, with the mid-terms looming, Trump 2.0 has paraded tech firms in front of the cameras to solemnly swear that they will shoulder the costs to power AI data center expansions, signing up to a “ratepayer protection pledge”.

Seven leading tech firms - Google, Microsoft, Meta, Oracle, xAI, OpenAI and Amazon - initially signed on to the pledge, which Washington insists will help keep utility bills down “very substantially” for the US consumer. It’s good optics, but in practice the enforceability of this voluntary code of conduct is harder to guarantee. For example, enforcement would depend on state utility commissions and contract terms.

But there are clear examples of tech giants looking to boost their own power generation capabilities. For example, Oracle recently announced an expanded fuel-cell power agreement with Bloom Energy that will supply up to 2.8 gigawatts of on-site clean electricity to support its AI infrastructure.

Specializing in solid oxide fuel cells, Bloom Energy works via localized power systems that can be deployed faster than traditional power plants or grid expansion, reducing the time it will take for the likes of Oracle to reap the benefits of the connection. The firm has reported AI demand outstripping supply for multiple quarters, so ramping up infrastructure is a critical factor.

That’s a common story across the AI sector and places companies like Bloom in a good competitive position in relation to traditional utility providers. As CEO KR Sridhar explained recently:

Our growth has been fueled by seismic changes in customer attitudes towards power. Bring your own power has become the mantra for data centers and power hungry factories. On-site power has moved from being a decision of last resort to a vital business necessity. This shift has led large power users to seek Bloom to fulfill their needs. Our demand from data center and commercial and industrial or C&I customers is secular and growing.

The firm has what he calls a “clear and simple promise” to customers with large time-to-power needs:

Bloom will not be the bottleneck to your growth, and you can count on us to deliver timely power. We will deliver our power platform faster than you can build your greenfield facilities, be it an AI factory or a C&I (commercial and industrial) facility. We demonstrated this recently by delivering a hyperscale AI factory order in 55 days against a 90-day commitment and power for a large factory before they could complete construction and commence operation.

That is quick time to power, the Bloom way. In short, we will continue to expand deliberately and with discipline, at a fraction of the cost and time it will take traditional legacy vendors...Bloom brings very clear competitive advantages that legacy providers that built their technology for the industrial age cannot adapt to.

My take

What’s electrifying (sorry) about the current energy ‘arms race’ around AI is that grid capacity has so quickly evolved from being a commodity proposition, taken for granted, to a sought-after ‘Holy Grail’, the elusive nature of which has actually become a constraint to the sector. What we once saw as basic utilities are now, in theory, critical economic infrastructure once more.