Kubernetes puts ingress nginx to rest at KubeCon - 'Nobody can keep it safe'

Summary: Kubernetes formally archived one of its most widely deployed components on day one of KubeCon Europe 2026. Steering committee member Kat Cosgrove explains why the project's own flexibility became its fatal flaw - and why anyone still running it should be treating migration as an emergency.

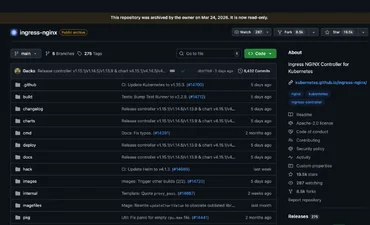

On the morning of 24 March, as KubeCon Europe opened in Amsterdam to its largest-ever audience, the Kubernetes project archived ingress nginx. The GitHub repository is now read-only. There will be no more releases, no bug fixes, and no security patches for a component that approximately 50% of cloud native environments rely on: the gateway that manages how external traffic reaches applications running on Kubernetes clusters.

Kat Cosgrove, a member of the Kubernetes Steering Committee and Head of Developer Advocacy at Minimus, takes point on communications for the shutdown process. When I sit down with her at KubeCon Europe on the day the archival goes live, she is characteristically direct:

It is closed. Now it is dead.

The Steering Committee and Security Response Committee (SRC) jointly defined the shutdown timeline at KubeCon North America in Atlanta last year, but as Cosgrove points out, the writing had been on the wall for considerably longer:

It was clear that the project was in trouble two and a half years ago. So I think we've been as lenient as we can afford to be.

A flexibility problem that became a security crisis

Ingress nginx was built early in Kubernetes' history as an example implementation of the Ingress Application Programming Interface (API). It became enormously popular because it was so flexible – a vendor-agnostic ingress controller that could be bent to fit almost any use case. That flexibility is precisely what makes it unmaintainable today.

Its fundamental architecture, like how flexible it is, makes it easy to exploit. And because of what it is – an ingress controller – if you are able to exploit it, it's very, very dangerous.

The security track record tells the story. In March 2025, the IngressNightmare vulnerabilities were disclosed – a batch of Common Vulnerabilities and Exposures (CVE) entries, the most severe of which, CVE-2025-1974, carried a Common Vulnerability Scoring System (CVSS) score of 9.8 and enabled unauthenticated remote code execution that could lead to full cluster takeover. Wiz Research estimated around 43% of cloud environments were vulnerable. And that was just one batch – severe CVEs had been relentless, with the project maintained by one or two volunteers in their free time.

Cosgrove is blunt about the structural impossibility of continuing:

It doesn't matter how many people you put on it. Its fundamental architecture is now a problem, and it's like years of technical debt. Nobody can keep it safe.

She goes further, noting that even significant corporate backing would not have changed the outcome:

If a hyperscaler had offered us a dedicated engineering team to maintain ingress nginx, we still would have just used that team to shut it down.

The undisclosed vulnerability question

For organizations still running ingress nginx, the situation is now acute. Existing installations will continue to function – and that is exactly the problem. Unless teams proactively check, they may not discover they are exposed until a compromise occurs. Cosgrove warns:

I should be incredibly shocked if there wasn't already somebody sitting on a nasty remote code execution vulnerability that hasn't been disclosed yet, and they're just waiting to drop it. We've archived it now so there are no more updates. It doesn't matter if somebody drops a CVSS nine or a ten, there's not going to be a patch and there's not going to be a new release.

She is equally skeptical about corporate forks as a lifeline:

I think it is folly to rely on that. I think it's dangerous.

The recommended migration path is Gateway API, which offers more expressive routing and better multi-tenant support. Several third-party ingress controllers also remain actively maintained. But none are drop-in replacements, and for complex environments the migration effort is substantial.

Lessons from dockershim – and a media problem

Cosgrove has form when it comes to communicating major Kubernetes transitions. Her first contribution to the project was helping to manage communications around the dockershim deprecation in 2020, when the project's own "Don't Panic" blog post had to calm a wave of misplaced fear that Kubernetes was dropping Docker entirely. That experience changed how the project handles major changes. She explains:

We forgot how large the project is and how mature everybody else sees the project as being. We assumed that everybody understood as much about the history of Kubernetes and Docker's relationship to Kubernetes as we do, and that just fundamentally isn't true.

The project now follows a standard playbook: plain-language explanation first, detailed technical guidance second. With ingress nginx, the team went further, using deliberately alarming language in the joint Steering and SRC statement. And yet, according to Cosgrove:

We tried to scare people, and it still feels like there are more people using it than there should be. We also got significantly less attention from the media than we expected.

Part of the challenge is that the channels which once connected the Kubernetes community to its users have fragmented. The decline of X (formerly known as Twitter) as a functional platform for technical communities has left a gap that neither LinkedIn, Bluesky, nor Reddit has fully filled:

That community is gone, and we can never have it back. And that's painful, and it sucks, and we have to figure out how to operate differently now.

A broader sustainability question

At its core, this is a sustainability story. Infrastructure at the foundation of an enormous number of production environments was kept alive by a skeleton crew of volunteers working in their spare time. Despite repeated calls for help, the resources never came. A managed shutdown was the only responsible option.

The pattern resonates. As I mentioned earlier this week and again at the KubeCon press lunch, Linux Foundation Executive Director Jim Zemlin announced $12.5 million in grants from Anthropic, AWS, GitHub, Google, Google DeepMind, Microsoft, and OpenAI to help maintainers deal with AI-generated vulnerability reports – a new pressure compounding an existing crisis.

For Cosgrove, though, the overriding feeling is relief:

I'm really happy to see it put to rest. We don't have to fight it anymore. The two maintainers don't have to toil anymore.

The relief is earned. But for thousands of organizations still running ingress nginx in production, the work is just beginning.

My take

The ingress nginx archival matters less for its technical detail than for the organizational reality underneath. This is widely deployed production infrastructure being put down because the economics of open source maintenance failed it.

One of the things I appreciate most about Cosgrove is her honesty about what did not work. The project tried harder than ever to communicate urgency, used deliberately alarming language, and still could not cut through. The fragmentation of technical community channels is not just an inconvenience – it is a governance problem for projects that need to reach users fast.

If you are a Chief Information Officer or Chief Technology Officer and you are not sure whether your clusters run ingress nginx, check today. Run `kubectl get pods --all-namespaces --selector app.kubernetes.io/name=ingress-nginx` and find out. There is no grace period and no extended support – only a ticking clock and an archived repository.
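
For teams that would rather script that check across several clusters, here is a minimal Python sketch. It assumes `kubectl` is installed and pointed at the cluster in question, and it reuses the label selector from the command above with `-o json` added. The function names are illustrative only. One limitation: an installation that was deployed with custom labels will not match this selector, so an empty result is not proof of absence.

```python
import json
import subprocess

# Label selector taken from the kubectl command quoted in the article.
SELECTOR = "app.kubernetes.io/name=ingress-nginx"


def find_ingress_nginx_pods(pod_list):
    """Summarize a kubectl JSON pod list as (namespace, pod name, images) tuples."""
    found = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        containers = pod.get("spec", {}).get("containers", [])
        images = [c.get("image", "") for c in containers]
        found.append((meta.get("namespace", ""), meta.get("name", ""), images))
    return found


def query_cluster():
    """Run the article's kubectl command with JSON output and parse the result."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "--all-namespaces",
         "--selector", SELECTOR, "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(result.stdout)


def main():
    """Print every matching pod, or a note that none matched the selector."""
    pods = find_ingress_nginx_pods(query_cluster())
    if not pods:
        print("No pods matched the ingress-nginx label selector.")
        return
    for namespace, name, images in pods:
        print(f"{namespace}/{name}: {', '.join(images)}")
```

Calling `main()` on a machine with cluster access prints one line per matching pod, including the container images, which helps show which controller version is running.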